This article is part of a series on the future of instructional design in the age of GenAI. The series explores how instructional designers can move beyond ad hoc prompting toward a more disciplined, challenge-based human–AI working method.

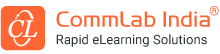

The current debate about GenAI in instructional design is still too shallow.

Too much of it revolves around one question: Will AI replace instructional designers?

That is not the most important question. The more important one is this:

What happens when instructional designers start relying on AI in ways that weaken their own thinking?

This article explores the hidden risks of GenAI in instructional design, including reduced independent thinking, the trade-off between speed and capability, and how instructional designers can use AI as a thinking partner rather than a shortcut.

Table of Contents

- Does AI Assistance Reduce Independent Thinking?

- Is the Problem AI or How We Use It?

- What Skills Define Good Instructional Design?

- What Is Cognitive Dependence in Instructional Design?

- Does GenAI Improve Speed at the Cost of Capability?

- Can GenAI Make Poor Instructional Design Look Good?

- What Is the Right Way to Use GenAI in Instructional Design?

- Final Thoughts

Does AI Assistance Reduce Independent Thinking?

A recent randomized study involving 1,222 participants should give L&D professionals pause. Across mathematical reasoning and reading-comprehension tasks, the researchers found that AI assistance improved short-term performance but reduced persistence and hurt later independent performance once the AI was removed. These effects appeared after only about 10 minutes of AI-assisted interaction.

The numbers are not trivial. In one experiment, the AI-assisted group’s unassisted solve rate dropped to 57%, compared with 73% in the control group. In another, it was 71% versus 77%. In the reading-comprehension experiment, it was 76% versus 89%. The strongest negative effects were associated with using AI for direct answers, while users who relied more on hints and clarifications did not show the same degree of impairment.
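To make those gaps concrete, here is a minimal sketch that works out the arithmetic from the figures quoted above. It uses only the percentages cited in this article; the labels "experiment A" and "experiment B" are placeholder names of my own, not the study's terms, and the relative-drop figure is a simple derived calculation rather than a statistic reported by the authors.

```python
# Unassisted solve rates quoted above, as (AI-assisted %, control %).
# The labels "experiment A" and "experiment B" are hypothetical names
# used only for this sketch; they are not taken from the study.
rates = {
    "experiment A": (57, 73),
    "experiment B": (71, 77),
    "reading comprehension": (76, 89),
}


def gaps(rates):
    """Return {label: (percentage-point gap, relative drop in %)}."""
    result = {}
    for label, (assisted, control) in rates.items():
        point_gap = control - assisted  # difference in percentage points
        relative_drop = round(100 * point_gap / control, 1)  # share of control rate lost
        result[label] = (point_gap, relative_drop)
    return result


for label, (point_gap, relative_drop) in gaps(rates).items():
    print(f"{label}: {point_gap} points lower, about {relative_drop}% below control")
```

On these figures, the gaps are 16, 6, and 13 percentage points respectively.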

Is the Problem AI or How We Use It?

The distinction between asking AI for direct answers and asking it for hints matters, because the issue is not AI itself. The issue is how AI is used.

If GenAI becomes a shortcut to immediate answers, it may slowly train people out of the very mental habits that make them good at complex work. The study’s authors argue that current AI systems are optimized for instant, complete responses rather than for supporting long-term competence, and they suggest this may condition people to expect immediate resolution rather than work through difficulty.

What Skills Define Good Instructional Design?

For instructional designers, that should hit close to home.

Good instructional design is not a matter of producing words on a screen. It requires judgment. It requires the ability to look at messy material from subject-matter experts (SMEs), identify what matters, separate signal from noise, structure learning logically, define what performance should change, and design assessments that test understanding rather than recall. None of that is mechanical. And none of it gets stronger when the designer gets used to asking AI for the first answer and then moving on.

That is the real risk.

Not replacement. Not even poor output, though that is part of it. The deeper risk is cognitive dependence.

What Is Cognitive Dependence in Instructional Design?

By cognitive dependence, I mean a gradual weakening of the designer’s willingness and ability to do the hard parts of thinking independently. Staying with ambiguity. Pushing through confusion. Testing whether an objective is really performance-based. Questioning whether a scenario is authentic. Seeing that an assessment item is technically correct but instructionally weak. These are not glamorous skills. But they are the essence of good design.

And they are built through effort.

Does GenAI Improve Speed at the Cost of Capability?

This is where the GenAI conversation often goes wrong in L&D. We tend to celebrate speed too quickly. Faster SME summary. Faster storyboard draft. Faster question generation. Faster narration. Faster visuals. Fair enough. Speed matters. But if speed comes from outsourcing the struggle that produces understanding, then we may be gaining efficiency while quietly eroding capability.

That would be a bad trade.

Can GenAI Make Poor Instructional Design Look Good?

Instructional design is one of those professions where weak thinking can hide behind polished output. AI can produce something that looks finished long before it is actually sound. A course can appear organized but still lack instructional logic. A learning objective can sound professional but still fail to drive performance. A scenario can look realistic but still test nothing meaningful. In other words, GenAI can make mediocre design look more convincing.

What Is the Right Way to Use GenAI in Instructional Design?

In my view, GenAI in Learning and Development (L&D) should not be treated primarily as a content machine. It should be treated as a thinking partner. Sometimes helpful. Sometimes challenging. Sometimes useful in structuring. Sometimes useful in critique. But never the unquestioned first and final designer.

This is also why prompting, by itself, is not enough.

What most instructional designers need is not just a better set of commands. They need a better human-AI working method. One that makes room for assistance but also preserves friction. One that allows AI to simplify SME content, suggest structures, propose assessments, and draft narration, while still forcing the designer to review, justify, refine, reject, and improve.

That may sound slower. In some moments, it is. But over time, it is probably the only sustainable model. Because organizations do not merely need faster output from their learning teams. They need stronger design capability inside those teams. And that capability will not survive if every hard part of the work is handed over to AI.

Final Thoughts

I do not think the main danger of GenAI in instructional design is that it will eliminate the profession. I think the bigger danger is subtler. If we use it poorly, it may leave the profession intact while hollowing out some of the thinking that makes it valuable.

That is why the future of instructional design will not belong to the people who use AI the most casually. It will belong to those who learn how to use it with discipline.

Reference: The findings above come from AI Assistance Reduces Persistence and Hurts Independent Performance, a 2026 randomized study by Grace Liu, Brian Christian, Tsvetomira Dumbalska, Michiel A. Bakker, and Rachit Dubey. The study reports causal evidence that AI assistance can reduce persistence and impair later unassisted performance across math and reading tasks, while also noting differences based on how people use the tool.

Next in the series: Why Prompting Is Not Enough: Instructional Designers Need a Method, Not Just Better Commands.